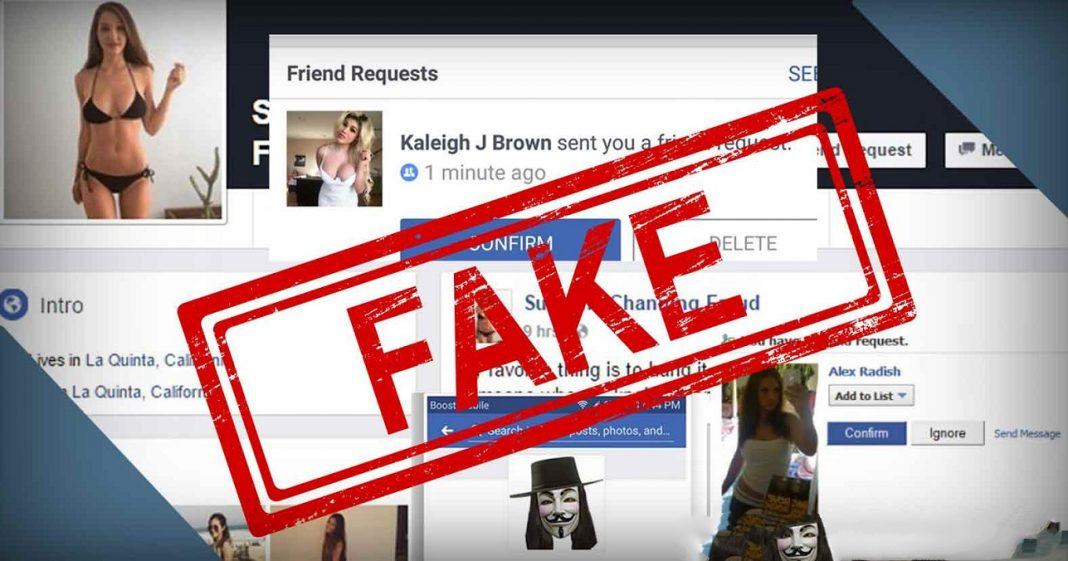

Social media giants like Facebook, Twitter and Instagram constantly have to fight fake accounts, which crop up faster than a new Kardashian child. The fact that Mark Zuckerberg’s Facebook now has to delete accounts by the billions says a lot, especially with the 2020 U.S. presidential election approaching.

As part of its transparency pledge, Facebook said Thursday that it removed more than 3 billion fake accounts from October to March, twice as many as in the previous six months.

Nearly all of them were caught before they had a chance to become “active” users of the social network.

In a new report, Facebook said it saw a “steep increase” in the creation of abusive, fake accounts. While most of these fake accounts were blocked “within minutes” of their creation, the use of computers to generate millions of accounts at a time meant not only that Facebook caught more of the fake accounts, but that more of them slipped through.

As a result, the company estimates that 5% of its roughly 2.4 billion monthly active users are fake accounts, or about 119 million. This is up from an estimated 3% to 4% in the previous six-month report.
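For readers who want to check the arithmetic, here is a minimal sketch using only the figures quoted above. The variable names are illustrative, and the reading that the 2.4 billion figure is rounded is an assumption drawn from the numbers themselves, not a statement from the report.

```python
# Figures as quoted in the article; variable names are illustrative only.
monthly_active_users = 2_400_000_000   # "2.4 billion monthly active users"
fake_percent = 5                       # "5%" estimated fake accounts
quoted_fake_accounts = 119_000_000     # "about 119 million"

# 5% of the quoted user base, using integer arithmetic to avoid rounding noise.
estimated_fake = monthly_active_users * fake_percent // 100

# User base implied by the quoted 119 million (assumes the 5% figure is exact).
implied_user_base = quoted_fake_accounts * 100 // fake_percent

print(f"5% of the quoted user base: {estimated_fake:,}")
print(f"User base implied by 119 million: {implied_user_base:,}")
```

Five percent of 2.4 billion is 120 million, so the quoted 119 million corresponds to a base of about 2.38 billion users, which rounds to 2.4 billion.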

The new numbers were released Thursday in the company’s third Community Standards Enforcement report. Starting next year, Facebook will release the report quarterly rather than twice a year and will begin including Instagram.

“The health of the discourse is just as important as any financial reporting we do, so we should do it just as frequently,” CEO Mark Zuckerberg said on a call with reporters on Thursday about the report. “Understanding the prevalence of harmful content will help companies and governments design better systems for dealing with it. I believe every major internet service should do this.”

The increase shows the challenges Facebook faces in removing accounts created by computers to spread spam, fake news and other objectionable material. Even as Facebook’s detection tools get better, so do the efforts by the creators of these fake accounts.

In another blog post shared Thursday, Facebook VP of Analytics Alex Schultz explained some of the reasons behind the sharp increase in fake accounts. He said one factor is “simplistic attacks,” which he claims don’t represent real harm or even a real risk of harm. This often occurs when someone makes a hundred million fake accounts that are then taken down right away. Schultz said they are removed so fast that nobody is exposed to them and they aren’t included in active user counts.

The new numbers come as the company grapples with challenge after challenge, ranging from fake news to Facebook’s role in election interference, hate speech and incitement to violence in the U.S., Myanmar, India and elsewhere.

Facebook also said Thursday that it removed 7.3 million posts, photos and other material for violating its rules against hate speech. That’s up from 5.4 million in the prior six months.

The company said it found more than 65 percent of hate speech on its own, before people reported it, during the first three months of 2019. That’s an improvement from 52 percent in the third quarter of 2018.

Facebook is under growing pressure to combat hate on its platform, as material continues to slip through even with recent bans of popular extremist figures such as Alex Jones and Louis Farrakhan.

Facebook employs thousands of people to review posts, photos, comments and videos for violations. Some things are also detected without humans, using artificial intelligence. Both humans and AI make mistakes and Facebook has been accused of political bias as well as ham-fisted removals of posts discussing — rather than promoting — racism.

A thorny issue for Facebook is its lack of procedures for authenticating the identities of those setting up accounts. Only in instances where a user has been booted off the service and won an appeal to be reinstated does it ask to see ID documents.

While some have argued for stricter authentication on social media services, the matter is complicated. People including U.N. free expression rapporteur David Kaye say it’s important to allow pseudonymous speech online for human rights activists and others whose lives could otherwise be endangered.

Dipayan Ghosh, a former Facebook employee and White House tech policy adviser who is currently a Harvard fellow, said that absent greater transparency from Facebook, there is no way of knowing whether its improved automated detection is doing a better job of containing the disinformation problem.

“We lack public transparency into the scale of disinformation operations on Facebook in the first place,” he said.

And even if just 5 million accounts slipped through the cracks, Ghosh added, how much hate speech and disinformation are they spreading through bots “that subvert the democratic process by injecting chaos into our political discourse?”

“The only way to address this problem in the long term is for government to intervene and compel transparency into these platform operations and privacy for the end consumer,” he said.

Facebook CEO Mark Zuckerberg has called for government regulation to decide what should be considered harmful content and on other issues. But at least in the U.S., government regulation of speech could run into First Amendment hurdles.

And what regulation might look like — and whether the companies, lawmakers, privacy and free speech advocates and others will agree on what it should look like — is not clear.

Of the 3.4 billion accounts removed in the six-month period, 1.2 billion came during the fourth quarter of 2018 and 2.2 billion during the first quarter of this year. More than 99 percent of these were disabled before someone reported them to the company. In the April-September period last year, Facebook blocked 1.5 billion accounts.

Facebook attributed the spike in the removed accounts to “automated attacks by bad actors who attempt to create large volumes of accounts at one time.” The company declined to say where these attacks originated, only that they were from different parts of the world.

Starting with this report, Facebook is disclosing how it deals with the sale of “regulated goods” — that is, drugs and firearms. Facebook prohibits the purchase, sale or gifting of firearms, as well as drugs including marijuana, which is legal in some states and countries. The company said it “took action” on 1.5 million cases involving drugs and 1.4 million involving firearms. This generally means removing the material from Facebook but can also involve suspending users or adding warning screens to videos showing objectionable content.

Facebook also said it has increased its proactive detection of both drugs and firearms. During the first quarter, its systems found and flagged 83.3% of violating drug content and 69.9% of violating firearm content before users reported it, according to the report.

Facebook’s policies say users, manufacturers and retailers cannot buy or sell non-medical drugs or marijuana on the platform. The rules also don’t allow users to buy, sell, trade or gift firearms on Facebook, including parts or ammunition.

In the report, the company also shared how many content removals users appealed and how much of that content the social network restored. People have the option to appeal Facebook’s decisions, with the exception of content that is flagged for extreme safety concerns.

Between January and March, Facebook said it “took action” on 19.4 million pieces of content. The company said 2.1 million pieces of content were appealed. After the appeals, 453,000 pieces of content were restored.
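The appeal and restoration rates implied by those three figures can be worked out directly. This is a rough sketch that assumes all 453,000 restorations followed an appeal, as the paragraph above states; the variable names are illustrative.

```python
# Figures as quoted in the article for January through March.
actioned = 19_400_000   # pieces of content Facebook "took action" on
appealed = 2_100_000    # pieces of content that were appealed
restored = 453_000      # pieces of content restored after appeal

appeal_rate = appealed / actioned            # share of actioned content appealed
restore_rate_of_appeals = restored / appealed  # share of appeals that succeeded
restore_rate_overall = restored / actioned   # share of all actioned content restored

print(f"Appealed: {appeal_rate:.1%} of actioned content")
print(f"Restored: {restore_rate_of_appeals:.1%} of appealed content")
print(f"Restored: {restore_rate_overall:.1%} of all actioned content")
```

On these numbers, roughly one in nine actions was appealed, about one in five appeals led to restoration, and a little over 2% of all actioned content was ultimately put back.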

Hate speech has been particularly challenging for Facebook. The company said its automated systems have a hard time identifying and removing hate speech, but that the technology is improving. The percentage of hate speech Facebook said it found proactively (meaning before users reported it) rose to 65.4% in the first quarter, up from 51.5% in the third quarter of 2018.

“What [AI] still can’t do well is understand context,” Justin Osofsky, Facebook VP of global operations, said on the call. “Context is key when evaluating things like hate speech.”

Osofsky also said Facebook will begin a pilot program where some of its content reviewers will focus on hate speech. The goal is for those reviewers to have a “deeper understanding” of how hate speech manifests and make “more accurate calls.”